AI in Vertec: the new LLM Client in Python 3

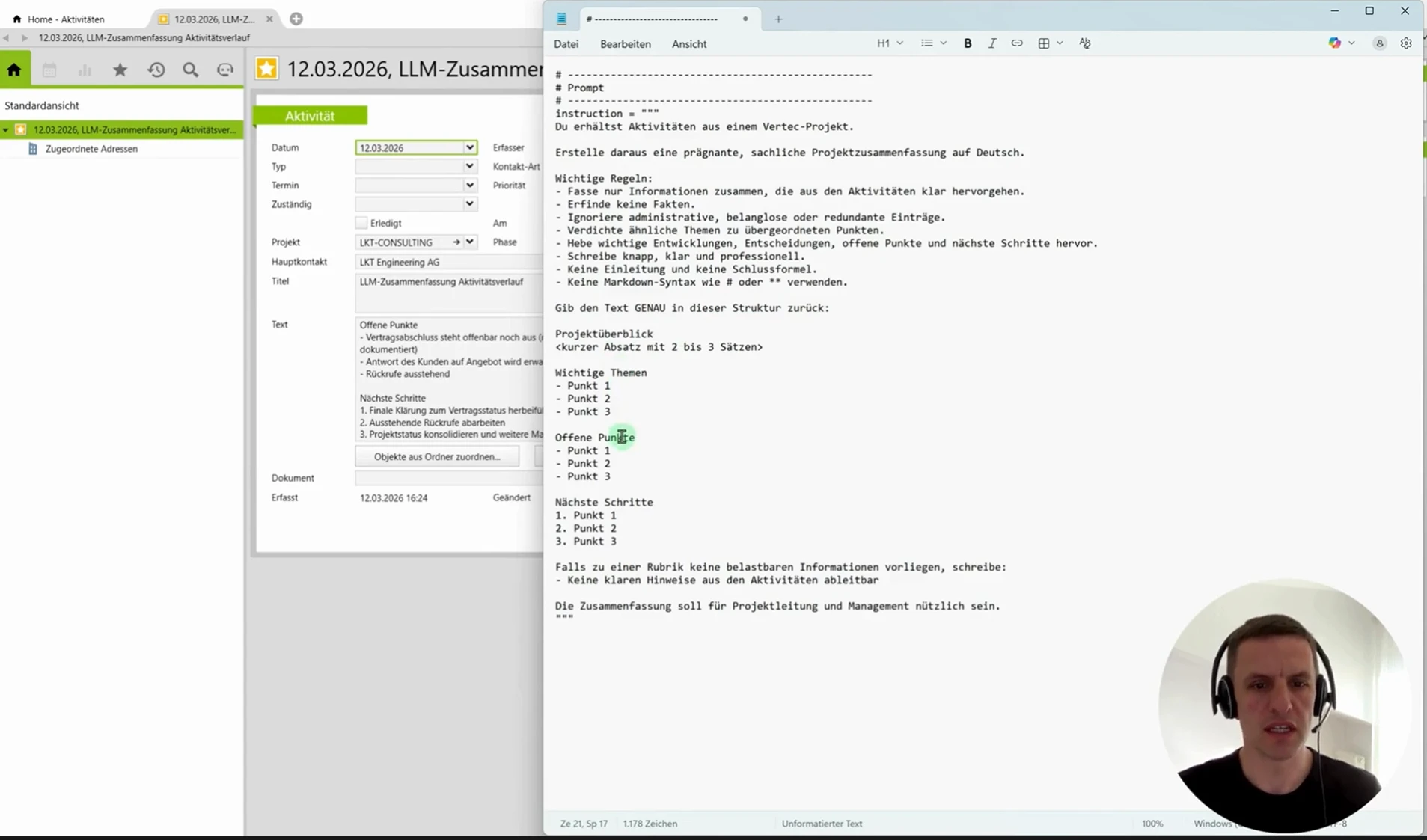

The new LLM client in Python 3 opens up attractive AI use cases in Vertec. With the introduction of Python 3, a generic LLM client can now be used from scripts. The KB article explains its usage in detail.

The prerequisites are simple: with Vertec version 6.8.0.17 or higher and an API key from OpenAI, Anthropic or Google, you can get started straight away. In the video, we show three use cases covering invoices, activities and project status.

The following applies to all three examples and in general: the prompt embedded in the script controls the AI response. Structure, length, focus: tell the AI in natural language what it should return. The script also controls which data is sent to the LLM, such as activities, services or expenses.
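As a rough illustration of these two levers, the sketch below assembles a prompt from a natural-language instruction and a hand-picked subset of data. It is plain Python and deliberately does not call the Vertec LLM client, whose interface is described in the KB article. The sample activities, the `INSTRUCTIONS` text and the `build_prompt` helper are all hypothetical.

```python
# Hypothetical sample data; in a real script this would be read from Vertec objects.
activities = [
    {"date": "2024-05-02", "type": "Call", "text": "Discussed project scope with client"},
    {"date": "2024-05-09", "type": "Meeting", "text": "Reviewed first draft of the concept"},
]

# Lever 1: the instruction text. Structure, length and focus are stated in natural language.
INSTRUCTIONS = (
    "Summarize the following activities in at most three bullet points. "
    "Focus on decisions and open items."
)


def build_prompt(instructions, records, fields):
    """Combine the instructions with the selected fields of each record.

    Hypothetical helper: only the fields listed in `fields` end up in the
    prompt, so everything else stays out of what is sent to the LLM.
    """
    lines = [" | ".join(str(record[f]) for f in fields) for record in records]
    return instructions + "\n\n" + "\n".join(lines)


# Lever 2: the data selection. Here the "type" field is deliberately left out.
prompt = build_prompt(INSTRUCTIONS, activities, fields=("date", "text"))
print(prompt)
```

Changing `INSTRUCTIONS` changes how the answer is shaped, while changing `fields` (or the list of records) changes what the model gets to see. The resulting string would then be passed to the LLM client as described in the KB article.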

Which use cases are you implementing with the new LLM client? We look forward to your feedback in the Vertec Forum.